nsx

-

NSX Distributed Firewall – How To Get Started?

One of the great benefits of the NSX Distributed Firewall (DFW) is the flexibility it offers when it comes to developing security policy models. Implementation of the application-intrinsic NSX DFW always begins with looking at the business needs and… Continue reading

-

Quick Tip – Ansible Module “nsxt_rest”

There are Ansible modules for configuring most of the NSX-T platform components, but for certain configuration tasks it might be quicker (or even necessary) to GET/POST/PUT/PATCH/DELETE to the NSX-T REST API directly. Now, in those situations you could use curl… Continue reading

-

Log Insight – Integration With Jenkins

During some research I did for a customer on how to trigger an action based on an error event in the SDDC, I built myself a lab and ended up with a concept that seems interesting enough to write some… Continue reading

-

Around the NSX-T Table(s)

The NSX-T Central Control Plane (CCP) builds and maintains a central repository for some of the tables that make NSX-T the unique network virtualization solution it is. More specifically, I’m talking about: the Global MAC address table, the Global ARP table… Continue reading

-

Configuring NSX-T 3.0 Stretched Networking

NSX-T 3.0 comes with brand-new features for logical networking in multisite environments. With NSX-T Federation the platform effectively receives a location-aware management, control, and data plane, and this gives us, the implementers and architects, some very interesting new options… Continue reading

-

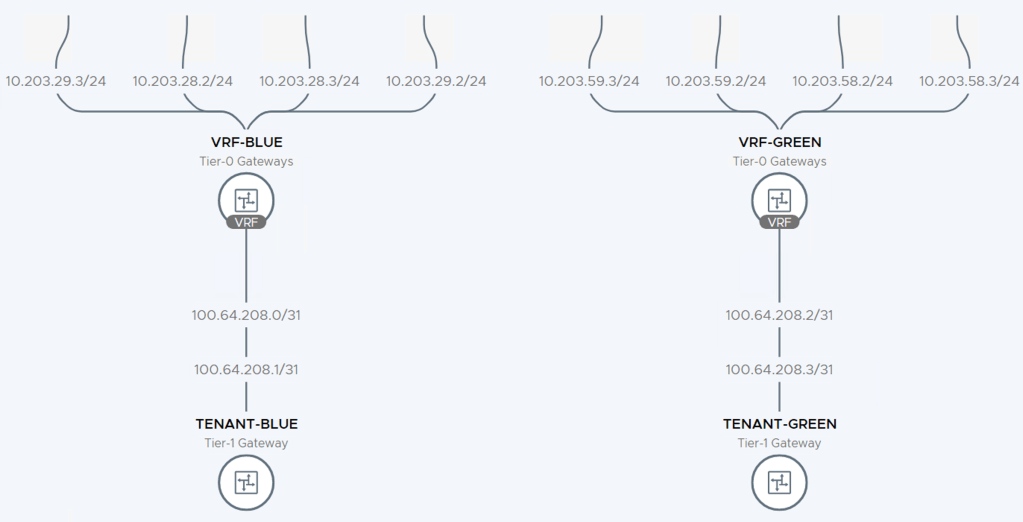

Setting up VRF Lite in NSX-T 3.0

NSX-T version 3.0 brings a new routing construct to the table: VRF Lite. With VRF Lite we are able to configure per-tenant data plane isolation all the way up to the physical network. Creating dedicated tenant Tier-0 Gateways for… Continue reading

-

Nested vSphere and NSX-T Deployed With Ansible – April Update

Some weeks ago I introduced you to my GitHub repository containing a set of Ansible playbooks that help people deploy a highly customizable nested lab environment running vSphere 6.7/7.0 with NSX-T 2.5/3.0. As I mentioned in the “launch” post, this project is… Continue reading