vsphere

-

Integrating TKG Service Clusters with NSX Security

Organizations that want to use NSX to secure their TKG Service Clusters (Kubernetes clusters) can now do so with relative ease. In this guide, I’ll walk you through configuring the integration between a TKG Service Cluster and NSX, a required step for…

-

SDDC.Lab v6 Released

Slow and steady. That’s how I would describe the pace and progress around making SDDC.Lab version 6 the new default and recommended version of the project. If you’re not familiar with the SDDC.Lab project, it’s a collection of Ansible Playbooks that…

-

Quick Tip: NSX Advanced Load Balancer for vSphere Tanzu with NSX Networking

As of NSX version 4.1.1, NSX Advanced Load Balancer version 22.1.4, and vSphere with Tanzu version 8.0 Update 2, we have the option to use the NSX Advanced Load Balancer as the load balancer provider for new vSphere with Tanzu…

-

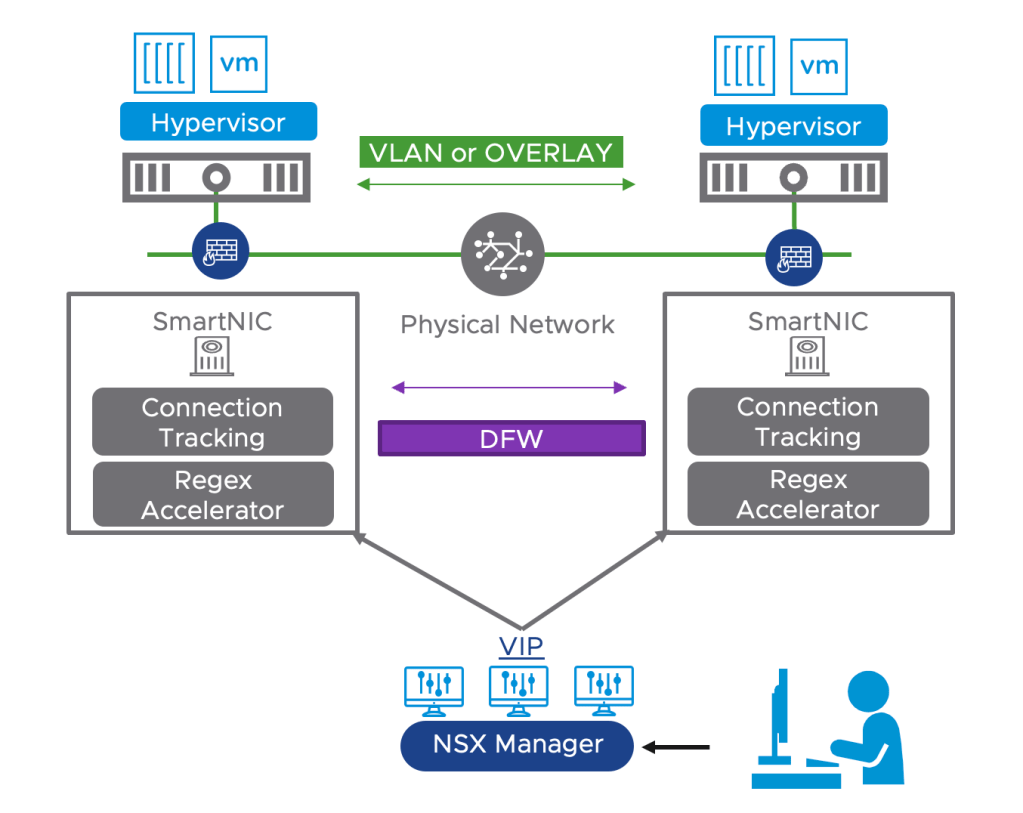

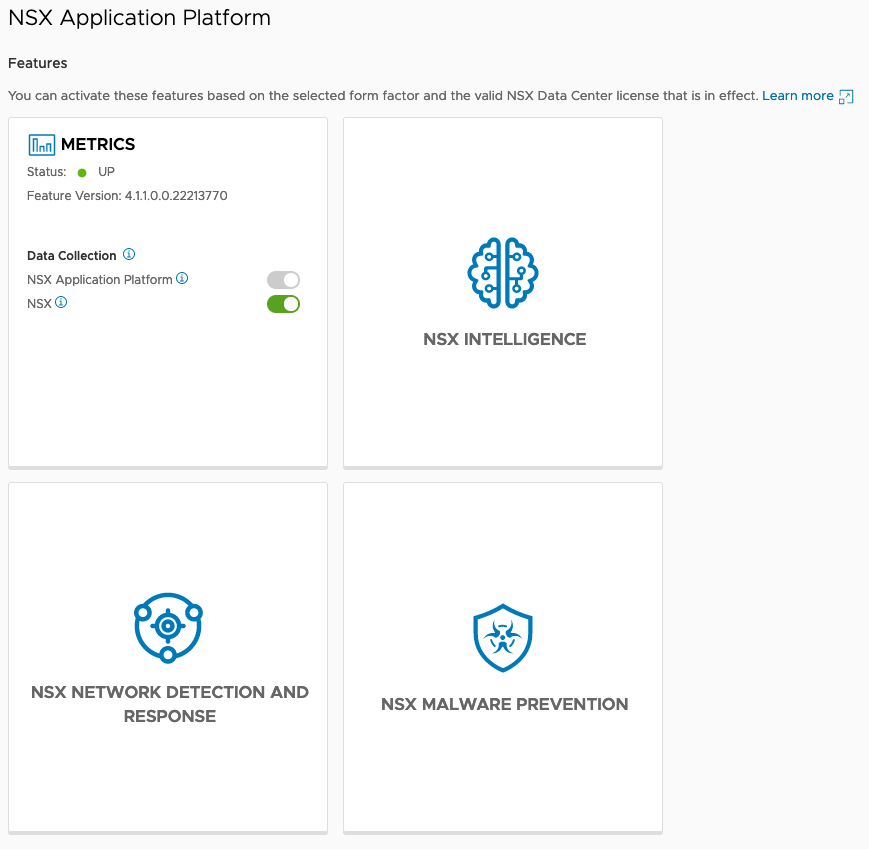

Configuring DPU-Based Acceleration for NSX

Offloading the NSX Distributed Firewall (DFW) to a Data Processing Unit (DPU) is an exciting new feature that is GA as of NSX version 4.1. Other NSX features that were already supported with DPU-based acceleration for NSX are: For NSX…

-

SDDC.Lab v5 Released

The finishing touches and testing are complete. We’re proud to announce that we’ve just released SDDC.Lab Version 5! For those of you who are not familiar with the SDDC.Lab project, it’s a collection of Ansible Playbooks that perform fully automated deployments…

-

SDDC.Lab v3

Last week we released version 3 of the SDDC.Lab project. For those of you who aren’t familiar with the project, it’s a set of Ansible scripts (Playbooks) that perform automated deployments of nested VMware SDDCs. An hour after you issue…