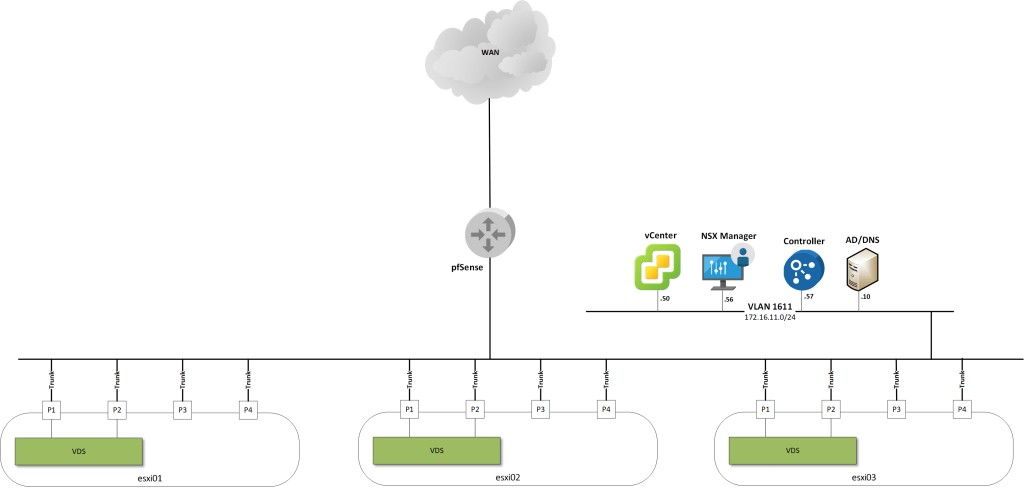

Welcome back! I’m in the process of installing NSX-T in my lab environment. So far I have deployed NSX Manager, which is the central management plane component of the NSX-T platform.

Today I will continue the installation and add an NSX-T controller to the lab environment.

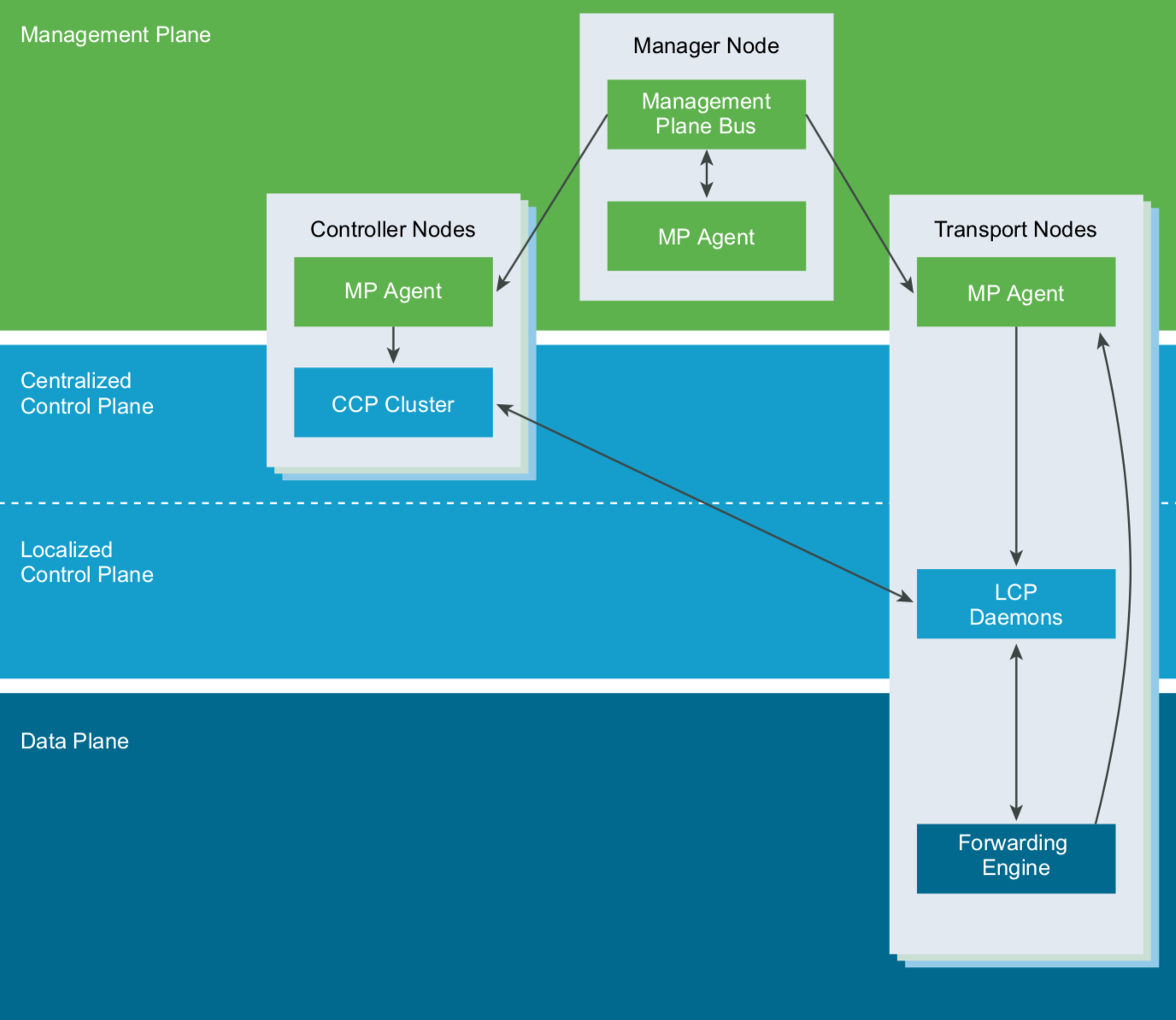

Control plane

The control plane in NSX is responsible for maintaining runtime state based on configuration from the management plane, propagating topology information reported by the data plane, and pushing stateless configuration to the data plane.

With NSX the control plane is split into two parts: the central control plane (CCP), which runs on the controller cluster nodes, and the local control plane (LCP), which runs on the transport nodes. A transport node is basically a server participating in NSX-T networking.

Now that I’m mentioning these planes, here’s a good diagram showing the interaction between them

Deploying the controller cluster

In a production NSX-T environment the controller cluster must have three members (controller nodes). The controller nodes are placed on three separate hypervisor hosts to avoid a single point of failure. In a lab environment like this a single controller node in the CCP is acceptable.
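The three-node requirement can be illustrated with simple majority arithmetic. This is a minimal sketch that assumes the controller cluster needs a strict majority of its members to stay operational; the function name is made up for illustration and is not part of NSX-T.

```python
def tolerated_failures(cluster_size: int) -> int:
    """Number of nodes that can fail while a strict majority survives.

    Assumption: the cluster stays operational only while more than
    half of its members are up.
    """
    if cluster_size < 1:
        raise ValueError("cluster_size must be at least 1")
    majority = cluster_size // 2 + 1
    return cluster_size - majority


for size in (1, 2, 3):
    print(size, "node(s) tolerate", tolerated_failures(size), "failure(s)")
```

Under that assumption a single lab node tolerates no failures at all, while three nodes survive the loss of one.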

First I’m adding a new DNS record for the controller node. This is not required, but a good practice imho.

| Name | IP address |
| --- | --- |
| nsxcontroller-01 | 172.16.11.57 |
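A quick way to confirm the new record before deploying is to resolve it from a script. The sketch below is only an illustration: the helper name and the `resolver` parameter are my own, and the fully qualified name in the comment assumes the record lives in the rainpole.local domain used elsewhere in this lab.

```python
import socket


def dns_record_matches(hostname, expected_ip, resolver=socket.gethostbyname):
    """Return True if hostname resolves to expected_ip, False otherwise."""
    try:
        return resolver(hostname) == expected_ip
    except OSError:
        # socket.gaierror (a subclass of OSError) is raised when the lookup fails
        return False


# Example call (FQDN is an assumption, not taken from the table above):
# dns_record_matches("nsxcontroller-01.rainpole.local", "172.16.11.57")
```

The resolver is passed in as a parameter so the check can be exercised without a live DNS server.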

An NSX-T controller node can be deployed in several ways. I added my vCenter system as a compute manager in part one, so perhaps the most convenient way is to deploy the controller node from the NSX Manager GUI. Other options include using the OVA package or the NSX Manager API.

I decided to deploy the controller node using the NSX Manager API. For this I need to prepare a small piece of JSON code that will be the body in the API call:

    {
      "deployment_requests": [
        {
          "roles": ["CONTROLLER"],
          "form_factor": "SMALL",
          "user_settings": {
            "cli_password": "VMware1!",
            "root_password": "VMware1!"
          },
          "deployment_config": {
            "placement_type": "VsphereClusterNodeVMDeploymentConfig",
            "vc_id": "bc5e0012-662f-421c-a286-7b408302bf15",
            "management_network_id": "dvportgroup-44",
            "hostname": "nsxcontroller-01",
            "compute_id": "domain-c7",
            "storage_id": "datastore-41",
            "default_gateway_addresses": [
              "172.16.11.253"
            ],
            "management_port_subnets": [
              {
                "ip_addresses": [
                  "172.16.11.57"
                ],
                "prefix_length": "24"
              }
            ]
          }
        }
      ],
      "clustering_config": {
        "clustering_type": "ControlClusteringConfig",
        "shared_secret": "VMware1!",
        "join_to_existing_cluster": false
      }
    }

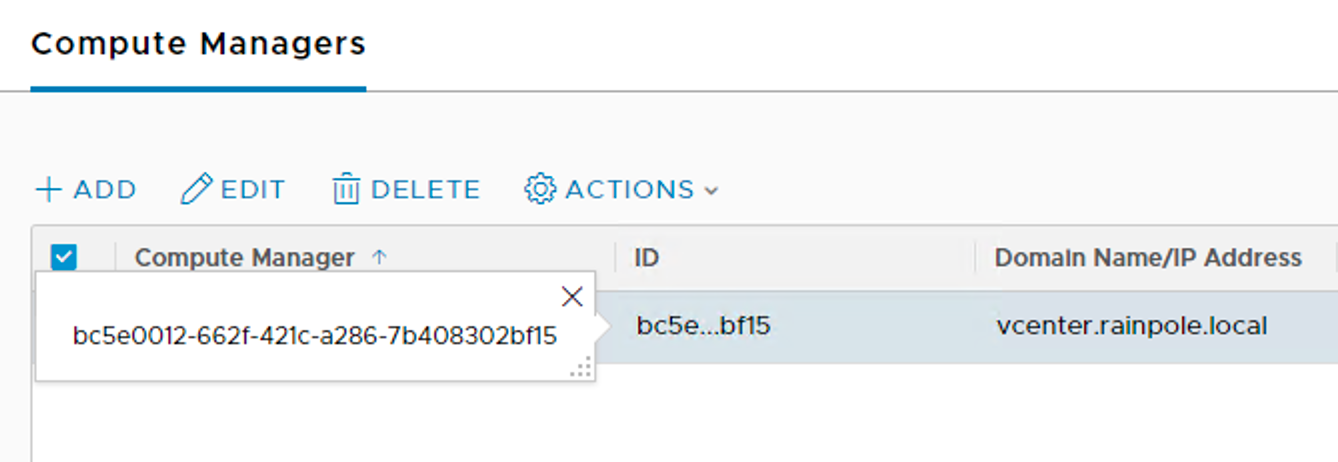

This code instructs the NSX Manager API to deploy one NSX-T controller node with a small form factor. Most things in the code above are pretty self-explanatory. I fetched the values for “management_network_id”, “compute_id”, and “storage_id” from the vCenter managed object browser (https://vcenter.rainpole.local/mob). The “vc_id” is found in NSX Manager under “Fabric” > “Compute Managers” under the “ID” column:

I use Postman to send the REST POST to “https://nsxmanager.rainpole.local/api/v1/cluster/nodes/deployments”:
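For anyone who prefers a script over Postman, the same call could be sketched in Python with only the standard library. This is a rough illustration, not a tested deployment tool: the function names are hypothetical, the `admin` user in the usage note is an assumption, and the gateway check is an extra sanity test that is not part of the API. The code only builds the request; it does not send it.

```python
import base64
import ipaddress
import json
import urllib.request

# URL taken from the post; everything else below is illustrative
DEPLOY_URL = "https://nsxmanager.rainpole.local/api/v1/cluster/nodes/deployments"


def build_deployment_body(hostname, ip, prefix_length, gateway, vc_id,
                          network_id, compute_id, storage_id,
                          password, shared_secret):
    """Assemble the JSON body for one small controller node."""
    # Sanity check: the default gateway should sit in the management subnet
    subnet = ipaddress.ip_network(f"{ip}/{prefix_length}", strict=False)
    if ipaddress.ip_address(gateway) not in subnet:
        raise ValueError(f"gateway {gateway} is outside {subnet}")
    return {
        "deployment_requests": [{
            "roles": ["CONTROLLER"],
            "form_factor": "SMALL",
            "user_settings": {
                "cli_password": password,
                "root_password": password,
            },
            "deployment_config": {
                "placement_type": "VsphereClusterNodeVMDeploymentConfig",
                "vc_id": vc_id,
                "management_network_id": network_id,
                "hostname": hostname,
                "compute_id": compute_id,
                "storage_id": storage_id,
                "default_gateway_addresses": [gateway],
                "management_port_subnets": [{
                    "ip_addresses": [ip],
                    # Kept as a string to match the body shown above
                    "prefix_length": str(prefix_length),
                }],
            },
        }],
        "clustering_config": {
            "clustering_type": "ControlClusteringConfig",
            "shared_secret": shared_secret,
            "join_to_existing_cluster": False,
        },
    }


def build_request(body, username, password):
    """Wrap the body in a POST request with HTTP basic authentication."""
    token = base64.b64encode(f"{username}:{password}".encode()).decode()
    return urllib.request.Request(
        DEPLOY_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
        method="POST",
    )
```

Sending would then be a matter of passing the result of `build_request(body, "admin", "...")` to `urllib.request.urlopen`. A lab NSX Manager with a self-signed certificate will fail certificate verification unless its certificate is trusted or a suitable `ssl.SSLContext` is supplied.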

This initiates the controller node deployment, which can be tracked in the NSX Manager GUI under “System” > “Components”:

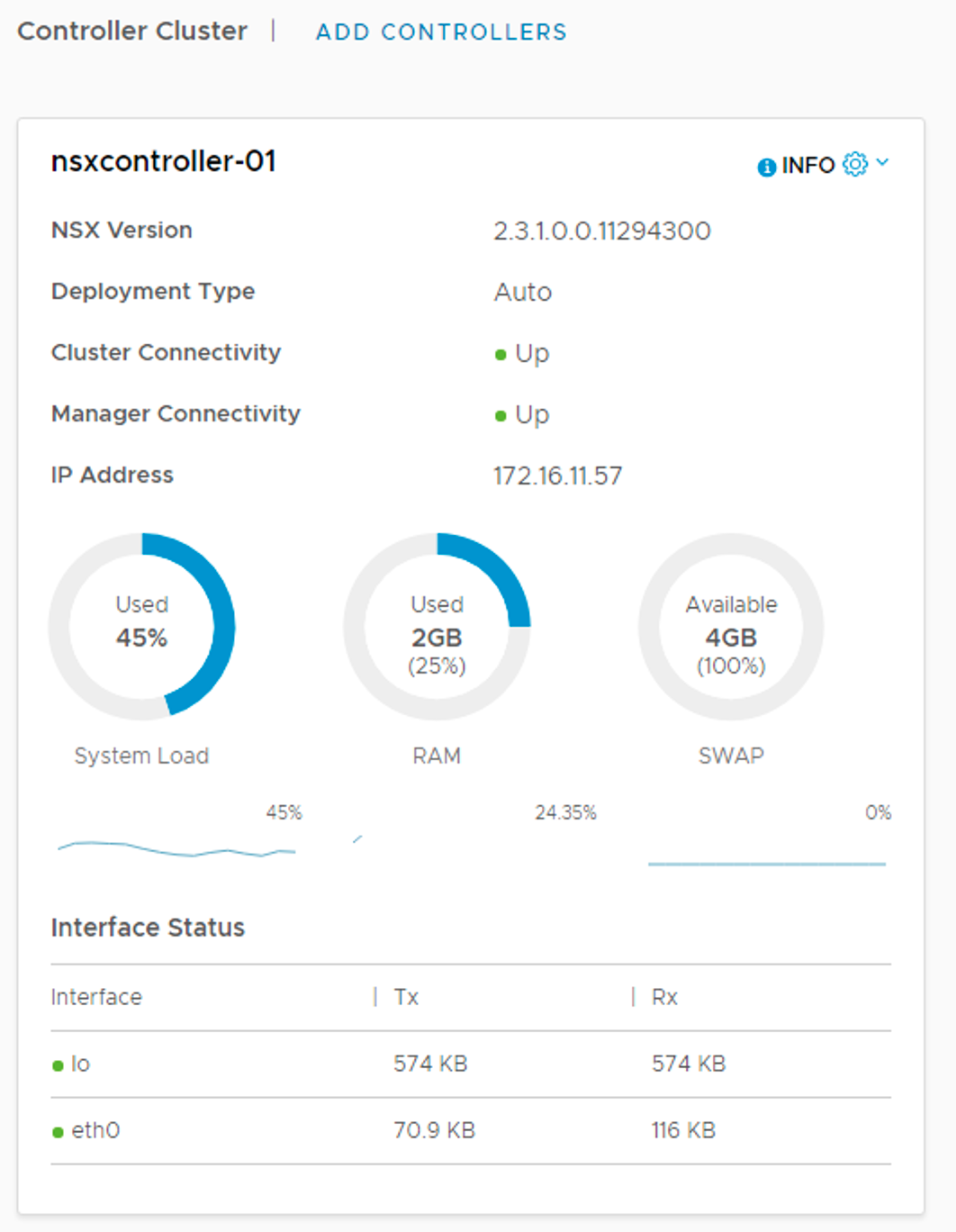

Once the deployment is finished it should look something like this:

Both cluster connectivity and manager connectivity are showing “Up”. I run the following command from the controller node CLI to verify the status:

get control-cluster status

To verify that NSX Manager knows about this new controller node I run the following command from the NSX Manager CLI:

get nodes

That looks good too. Another useful command is:

get management-cluster status

It shows the status of the management cluster and the control cluster.

I also configure DNS and NTP on the new controller node from the controller node CLI:

set name-servers 172.16.11.10

set ntp-server ntp.rainpole.local

Conclusion

I have the controller “cluster” up and running. With only one controller node it isn’t much of a cluster, but it will do for lab purposes.

The management plane and control plane are now operational. In the next part I will continue with installing the data plane components.
